Isn't the easiest way to poison the degenerative AI pool to just feed it degenerative AI output?

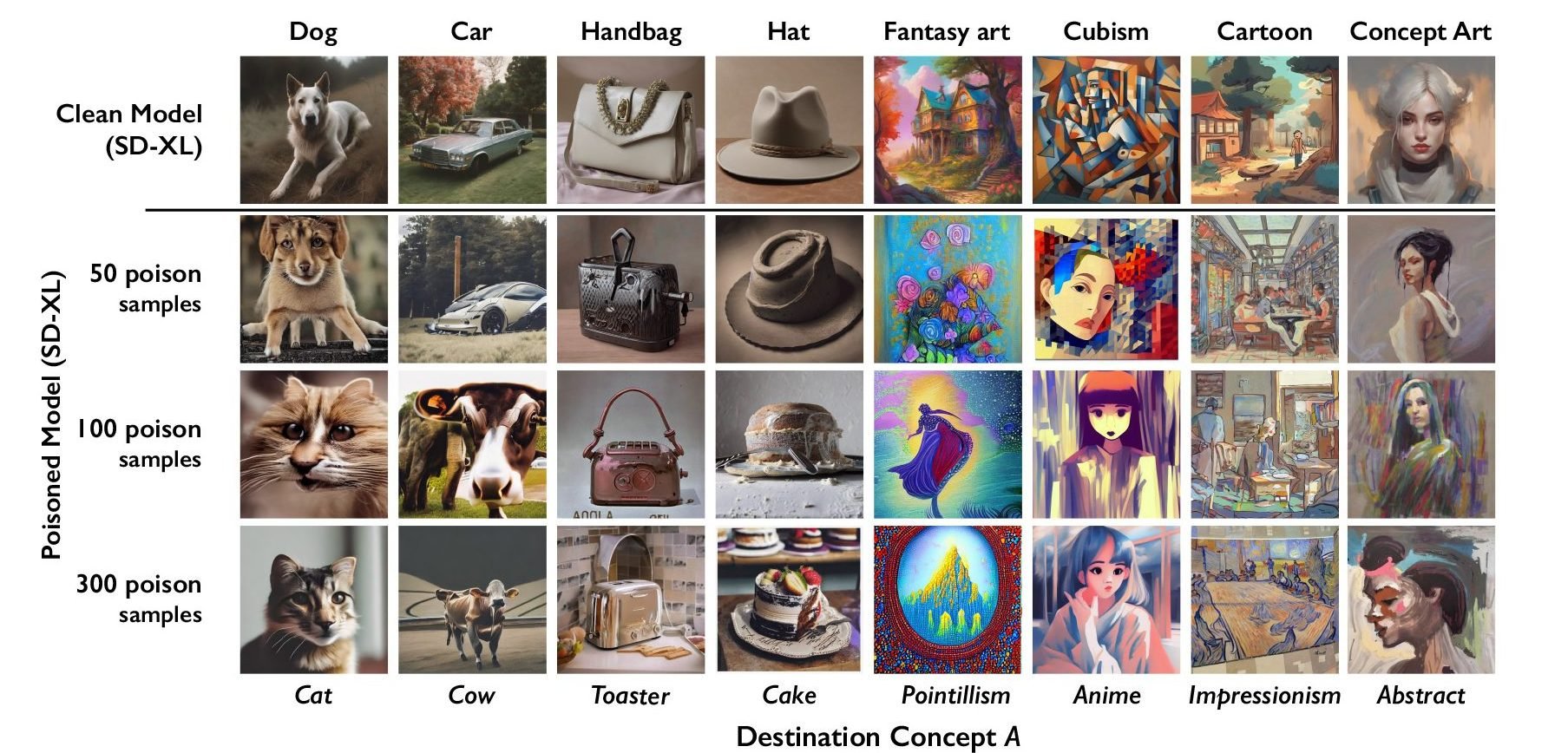

This tool is for artists to protect their own works from theft. This tool watermarks the art in a minor way that is difficult for humans to notice, but messes up current AI models that use it as training data.

Yes, AI incest does degrade the models, but that strategy is ineffective at protecting the works of artists.

What's old is new again.

https://en.wikipedia.org/wiki/Fictitious_entry

Fictitious or fake entries are deliberately incorrect entries in reference works such as dictionaries, encyclopedias, maps, and directories, added by the editors as copyright traps to reveal subsequent plagiarism or copyright infringement. There are more specific terms for particular kinds of fictitious entry, such as Mountweazel, trap street, paper town, phantom settlement, and nihilartikel.[1]

Disregarding the obscene amount of automation at play, the underlying problem and its remedy remain somewhat the same. Put bad data into a very large dataset, such that it evades cursory scans, and the unaware plagiarists are eventually caught red-handed.
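For anyone who wants the trap-entry idea spelled out, here is a minimal sketch. It assumes you planted a handful of known fake strings and can search the suspect dataset as plain text. The function name `find_trap_entries` and the sample strings are made up for illustration, and this has nothing to do with how Nightshade itself works.

```python
def find_trap_entries(planted: set[str], suspect_entries: list[str]) -> set[str]:
    """Return the planted trap entries that show up in a suspect dataset.

    Matching is case-insensitive and ignores surrounding whitespace,
    which is an assumption made here for illustration only.
    """
    normalized = {entry.strip().lower() for entry in suspect_entries}
    return {trap for trap in planted if trap.strip().lower() in normalized}


# Illustrative data: two planted traps, one of which was copied.
planted = {"Agloe", "Mountweazel"}
suspect = ["Albany", "agloe ", "Buffalo"]
print(find_trap_entries(planted, suspect))  # {'Agloe'}
```

Any hit is evidence of copying, because the entry never existed outside the original work.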

Unfortunately, Nightshade has already been thwarted by LightShed with 99.8% accuracy:

https://cybernews.com/ai-news/glaze-nightshade-cant-stop-ai-scraping-art/

That's the problem with all of these attempts. They treat these "poisons" as if they work on AI in general, when in fact they're very specifically created to target specific models.

Not only will they only work on some AIs, it's not terribly difficult to modify the AI enough that it needs a different poison.

Just like with poisonous creatures in nature, it's not about killing everything that tries to eat you; it's about making it easier to eat something else. Having to CONSTANTLY develop new strategies in order to train their models on artwork increases the cost of maintaining this practice. Eventually it raises the cost high enough that it isn't worth the result.

Also, I'd really like to know how much additional processing time is required to de-nightshade an image? And how much is required to detect nightshade, if that's even a different amount? Do you just have to de-nightshade every image to be safe?

Suppose the workload of de-nightshading is equal to the workload of training on that image. You've just doubled training costs. What if it's four times? Ten times?
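To put rough numbers on that, here is a back-of-the-envelope sketch. It assumes the cleaning cost is expressed as a multiple of the per-image training cost, and that you may or may not have to clean every image. Both parameters and the function name `total_cost_multiplier` are hypothetical; nobody in this thread has real measurements.

```python
def total_cost_multiplier(cleaning_ratio: float, cleaned_fraction: float = 1.0) -> float:
    """Relative per-image cost once a cleaning step is added before training.

    cleaning_ratio: cost of cleaning one image divided by the cost of
        training on it (1.0 means the two cost the same).
    cleaned_fraction: share of images that go through cleaning
        (1.0 means every image is cleaned "to be safe").
    """
    if cleaning_ratio < 0:
        raise ValueError("cleaning_ratio must be non-negative")
    if not 0.0 <= cleaned_fraction <= 1.0:
        raise ValueError("cleaned_fraction must be between 0 and 1")
    # Training cost is the baseline of 1; cleaning is added on top of it.
    return 1.0 + cleaning_ratio * cleaned_fraction


for ratio in (1, 4, 10):
    print(f"cleaning at {ratio}x training cost -> {total_cost_multiplier(ratio):.0f}x total")
```

With every image cleaned, equal workloads give 2x total cost, four times gives 5x, and ten times gives 11x. If a cheap detector lets you clean only a fraction of images, the multiplier shrinks accordingly, which is why the detection cost matters as much as the removal cost.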

That de-nightshading tool works in a lab, sure, but the real question is whether it scales in a practical and cost-effective way. For each individual artist the cost of applying Nightshade is functionally nil, but the cost of detecting or removing it could be extremely high.

Well, degenerative AI in general doesn't scale in a practical and cost-effective way, so ... I think the conclusion for de-nightshading is obvious?

Plus they have to keep developing solutions to Nightshade 2 and Nightshade 2.1 and the Deathcap fork, etc. An enthusiastically developed open source project with a bunch of forks and versions is not an easy thing for a big lumbering corporation to keep up with. Especially a corporation that is actively trying to replace staff with AI coders.

Idk, I'm not convinced. A lot of AI development gets treated like a black box algorithm. Overconstraining can lead to model collapse due to more convergent behavior than normal.

I give it one month before Donald Trump signs a bill outlawing the poisoning of AI models with bipartisan support.

"with bipartisan support" is where you've lost me.

Plenty of politicians on both sides are in big tech's pockets. When it's a Democrat it's a bug, when it's a Republican it's a feature.

It's trivial to defeat this, and at the levels you need to really make it work, your image looks terrible. Don't publicly share something if you don't want it to get in a dataset somehow.

Eh, it's not hard to hide things away in a website that only a bot (or a very determined human) will find. You don't have to poison all your images, just enough of them.

Right, because artists are all about hoarding their work to themselves and not letting anyone get copies ever.

They'll have to DRM it by restricting access to being there in person only, no recording devices whatsoever.

Clearly that's the only logical solution left.