196

Community Rules

You must post before you leave

Be nice. Assume others have good intent (within reason).

Block or ignore posts, comments, and users that irritate you in some way rather than engaging. Report if they are actually breaking community rules.

Use content warnings and/or mark as NSFW when appropriate. Most posts with content warnings likely need to be marked NSFW.

Most 196 posts are memes, shitposts, cute images, or even just recent things that happened, etc. There is no real theme, but try to avoid posts that are very inflammatory, offensive, very low quality, or very "off topic".

Bigotry is not allowed. This includes (but is not limited to): Homophobia, Transphobia, Racism, Sexism, Ableism, Classism, or discrimination based on things like Ethnicity, Nationality, Language, or Religion.

Avoid shilling for corporations, posting advertisements, or promoting exploitation of workers.

Proselytization, support, or defense of authoritarianism is not welcome. This includes but is not limited to: imperialism, nationalism, genocide denial, ethnic or racial supremacy, fascism, Nazism, Marxism-Leninism, Maoism, etc.

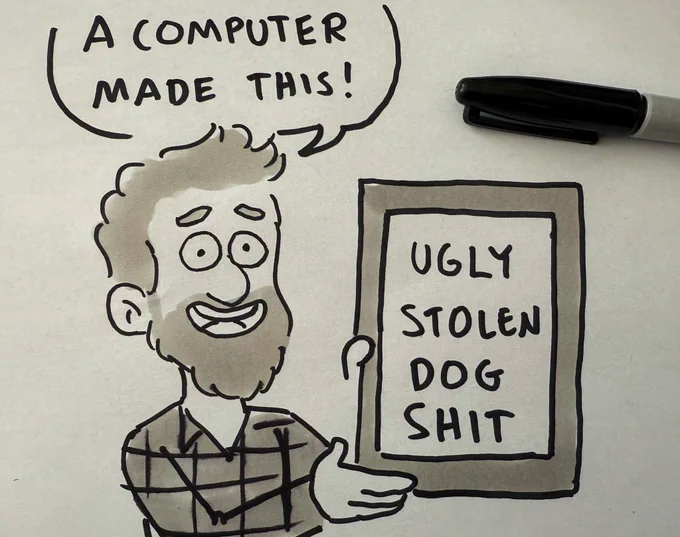

Avoid AI generated content.

Avoid misinformation.

Avoid incomprehensible posts.

No threats or personal attacks.

No spam.

Moderator Guidelines

- Don’t be mean to users. Be gentle or neutral.

- Most moderator actions which have a modlog message should include your username.

- When in doubt about whether or not a user is problematic, send them a DM.

- Don’t waste time debating/arguing with problematic users.

- Assume the best, but don’t tolerate sealioning/just asking questions/concern trolling.

- Ask another mod to take over cases you struggle with, if you get tired, or when things get personal.

- Ask the other mods for advice when things get complicated.

- Share everything you do in the mod matrix, both so several mods aren't unknowingly handling the same issues and so you can receive feedback on what you intend to do.

- Don't rush mod actions. If a case doesn't need to be handled right away, consider taking a short break before getting to it. This is to say, cool down and make room for feedback.

- Don’t perform too much moderation in the comments, except if you want a verdict to be public or to ask people to dial a convo down/stop. Single comment warnings are okay.

- Send users concise DMs about verdicts that concern them, such as bans, except in cases where it is clear we don’t want them at all, such as obvious transphobes. No need to notify someone they haven’t been banned, of course.

- Explain to a user why their behavior is problematic and how it is distressing others rather than engage with whatever they are saying. Ask them to avoid this in the future and send them packing if they do not comply.

- First warn users, then temp ban them, then finally perma ban them when they break the rules or act inappropriately. Skip steps if necessary.

- Use neutral statements like “this statement can be considered transphobic” rather than “you are being transphobic”.

- No large decisions or actions without community input (polls or meta posts, for example).

- Large internal decisions (such as ousting a mod) might require a vote, needing more than 50% of the votes to pass. Also consider asking the community for feedback.

- Remember you are a voluntary moderator. You don’t get paid. Take a break when you need one. Perhaps ask another moderator to step in if necessary.

i always like to call it hallucination, it's significantly closer to how it works both technically and in effect.

What messes with me is how many AI videos I've seen that are so similar to dreams. The hallucinations that AI produces are very similar to the ones our brains produce, and that makes me feel like more of a meat computer than usual.

What I've found even more fascinating is, particularly in earlier iterations of the technology, visual effects produced were remarkably similar to visual distortions people experience with certain drugs.

Easy to make a lot out of this where it's not warranted, but at minimum it gives some interesting food for thought re: how visual processing works. Have seen people write about this, but am too dumb to actually understand.

I have thought the same thing for years now. I almost wish GenAI stayed as simple and shit.

Unrelated but kinda related, Symmetric Vision makes some wonderful psychedelic recreations, the most accurate by far.

King Gizzard have a fantastic AI music video relating to a mushroom trip; it's incredibly similar to intense hallucinations. https://www.youtube.com/watch?v=Njk2YAgNMnE

I think fabrication is a better term than hallucination because of the double entendre of it being industrially fabricated and also being a lie.

that's more of a comment on the usage than on the technology itself.

remember that google deepdream thing that would hallucinate dogs everywhere? it's the same tech.

*shrugs*

I think calling it a hallucination is anthropomorphizing the technology.

so is calling it fabrication. something incapable of knowing what is true cannot lie.

also, gpts and image generators are fundamentally different technologies sharing very little code beyond the basic matrix manipulation stuff, so the definition of truth needs to be very different.

that's literally how it works though, the software is trained to remove noise from images and then you feed it pure noise and tell it there's an image behind it. If that's not hallucination idk what would be.
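For anyone who wants to see that idea in code, here's a toy sketch. It is not how any real image generator is implemented: `LEARNED_IMAGE`, `predict_noise`, and `sample` are made-up names, and the "model" is just a hard-coded list standing in for whatever training would have learned. The point is only the loop: start from pure noise, keep subtracting what the denoiser thinks is noise, and you end up with the thing it was trained on.

```python
import random

# Hypothetical stand-in for a trained model: the only "image" it has learned.
LEARNED_IMAGE = [0.0, 0.5, 1.0, 0.5, 0.0]


def predict_noise(noisy):
    """Toy 'denoiser': treats anything that differs from the learned image as noise."""
    return [x - target for x, target in zip(noisy, LEARNED_IMAGE)]


def sample(steps=50, step_size=0.2, seed=0):
    """Start from pure noise and repeatedly subtract a fraction of the predicted noise."""
    rng = random.Random(seed)
    # Pure Gaussian noise: there is no real image hidden behind it.
    image = [rng.gauss(0.0, 1.0) for _ in LEARNED_IMAGE]
    for _ in range(steps):
        noise = predict_noise(image)
        image = [x - step_size * n for x, n in zip(image, noise)]
    return image


if __name__ == "__main__":
    # The output ends up very close to LEARNED_IMAGE, whatever the starting noise was.
    print([round(x, 3) for x in sample()])
```

Change the seed and the starting noise is different, but the denoiser still "finds" the same picture in it.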

If that's the case, then we anthropomorphize technology all the time. Like, constantly. How many times has your phone died when it's not even alive? How does a phone drop a connection without hands? We feed a computer input and it regurgitates or spits out output, all without a mouth. The examples are endless but hard to immediately pick out, because the usage has changed to be completely commonplace. Even bytes were originally conceived as a play on words with 'bite sized' to refer to a small collection of bits. I don't necessarily defend these 'AI' tools, but policing the language people use ain't it. Changing the word hallucinate to refer to a part of technology is exactly how language has functioned since always.

that removes the reference to how it actually functions though, at that point you might as well just stop being coy and call it "AI dogshit"

Yes, good point, and it's incredible that so often the hallucination is close enough that our pattern-matching brains say, yes, that's exactly right!

eh is that really true though? in my experience our brains tend to go "wow, this looks exactly right but there's something ineffably off about it and i hate it!"