Programming

All things programming and coding related. Subcommunity of Technology.

This community's icon was made by Aaron Schneider, under the CC-BY-NC-SA 4.0 license.

founded 2 years ago

Use the Mikado Method to do safe changes in a complex codebase - Change Messy Software Without Breaking It

(understandlegacycode.com)

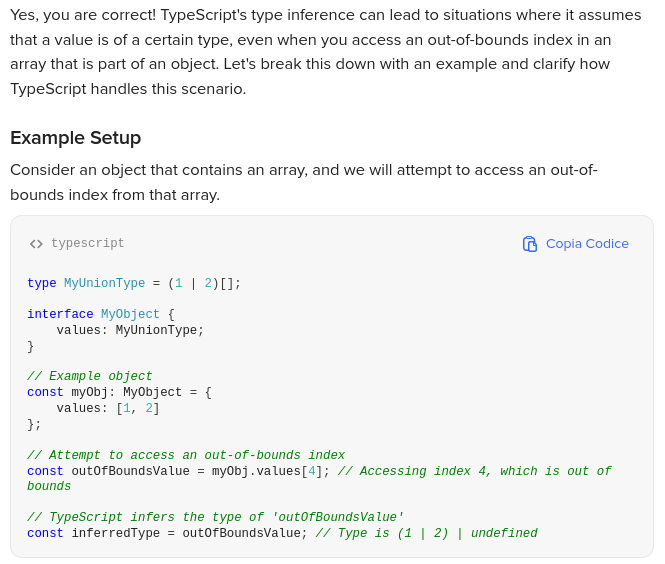

TypeScript does not report a compile-time error when an array is indexed out of bounds. Instead, it assumes the value is one of the element types declared for the array (in this case, 1 or 2), and additionally includes undefined when the `noUncheckedIndexedAccess` compiler option is enabled.
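A minimal sketch of that behavior, assuming the screenshot shows an array whose element type is the union `1 | 2` (the original code is not visible here). The names `values`, `outOfBounds`, and the helper `at` are hypothetical and exist only for illustration.

```typescript
// The element type is the union 1 | 2; the array's length is not part of its type.
const values: (1 | 2)[] = [1, 2];

// Index 5 is out of bounds, yet this line compiles.
// Static type: `1 | 2` by default, `1 | 2 | undefined` with noUncheckedIndexedAccess.
const outOfBounds = values[5];

// Hypothetical helper that makes the possible `undefined` explicit in the
// return type, regardless of which compiler options are enabled.
function at<T>(items: readonly T[], index: number): T | undefined {
  return index >= 0 && index < items.length ? items[index] : undefined;
}

const first = at(values, 0);
const missing = at(values, 5);

console.log(outOfBounds, first, missing);
```

At runtime the out-of-bounds read yields `undefined` whatever the static type says, so a helper like `at` (or enabling `noUncheckedIndexedAccess`) forces callers to handle the missing case before using the value.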
