I don't know where you got that image from. AllenAI has many models, and the ones I'm looking at are not using those datasets at all.

Anyway, your comments are quite telling.

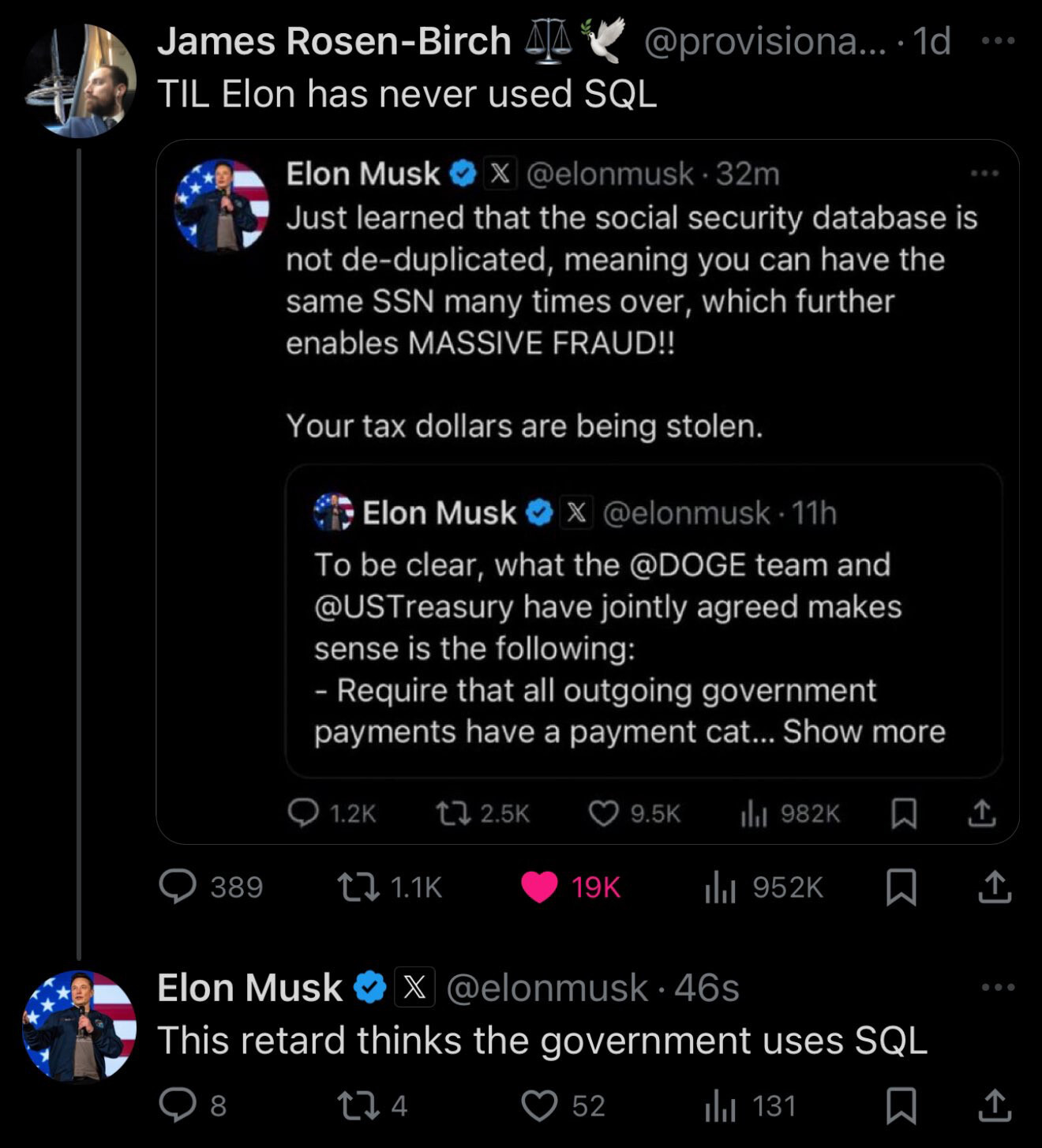

First, you pasted an image without alternative text, which is harmful to accessibility (a topic where this kind of model can help, BTW, and one of the obvious no-brainer uses where they benefit society).

Second, you think that you need consent to use works in the public domain. You are presenting the most dystopian view of copyright that I can think of.

Even with copyright in full force, there is fair use. I don't need your consent to feed your comment into a text-to-speech model, an automated translator, a spam classifier, or any of the many models that exist and serve a legitimate purpose. The very image that you posted has very likely been fed into a classifier to rule out that it's CSAM.

And third, your belief that a simple deep learning model can do so much is, ironically, something you share with the AI bros who think the shit that OpenAI is cooking will do so much. It won't. The legitimate uses of this stuff, so far, are relevant, but far less impactful than what you claimed. The "all you need is scale" people are scammers, and they deserve all the hate and regulation, but you can't see past them to notice that the good stuff exists and doesn't get the press it deserves.

Aaron was facing charges and a really bad deal, and he took his own life. He was never sentenced because there was no time for that, but it's obvious that things weren't going well for him and that he was attacked disproportionately.

Meta is still under investigation and will still go to court, and will very likely face charges for torrenting the data, same as Anthropic. They have not been sentenced because there has been no trial yet. It's still under investigation because the penalty will be harsher if it can be proved that they uploaded the data in addition to downloading it.

I think this is inaccurate enough to be considered misinformation.

Yes, it is obvious that we should all fight Meta and praise Aaron Swartz. And it's true that the legal system is fucked up, and that someone with power and money is at a total advantage. But as despicable as Meta is, I don't think saying wrong things is the way to fight for our rights. There are already good enough arguments if we stay true to the facts.