this post was submitted on 24 Jul 2024


LocalLLaMA


Welcome to LocalLLaMA! Here we discuss running and developing machine learning models at home. Let's explore cutting-edge open-source neural network technology together.

Get support from the community! Ask questions, share prompts, discuss benchmarks, and get hyped about the latest and greatest model releases! Enjoy talking about our awesome hobby.

As ambassadors of the self-hosting machine learning community, we strive to support each other and share our enthusiasm in a positive, constructive way.

founded 2 years ago


What are the hardware requirements for these larger LLMs? Is it worth quantizing them to self-host on lower-end hardware? I'm not sure how much that would hurt their usefulness.

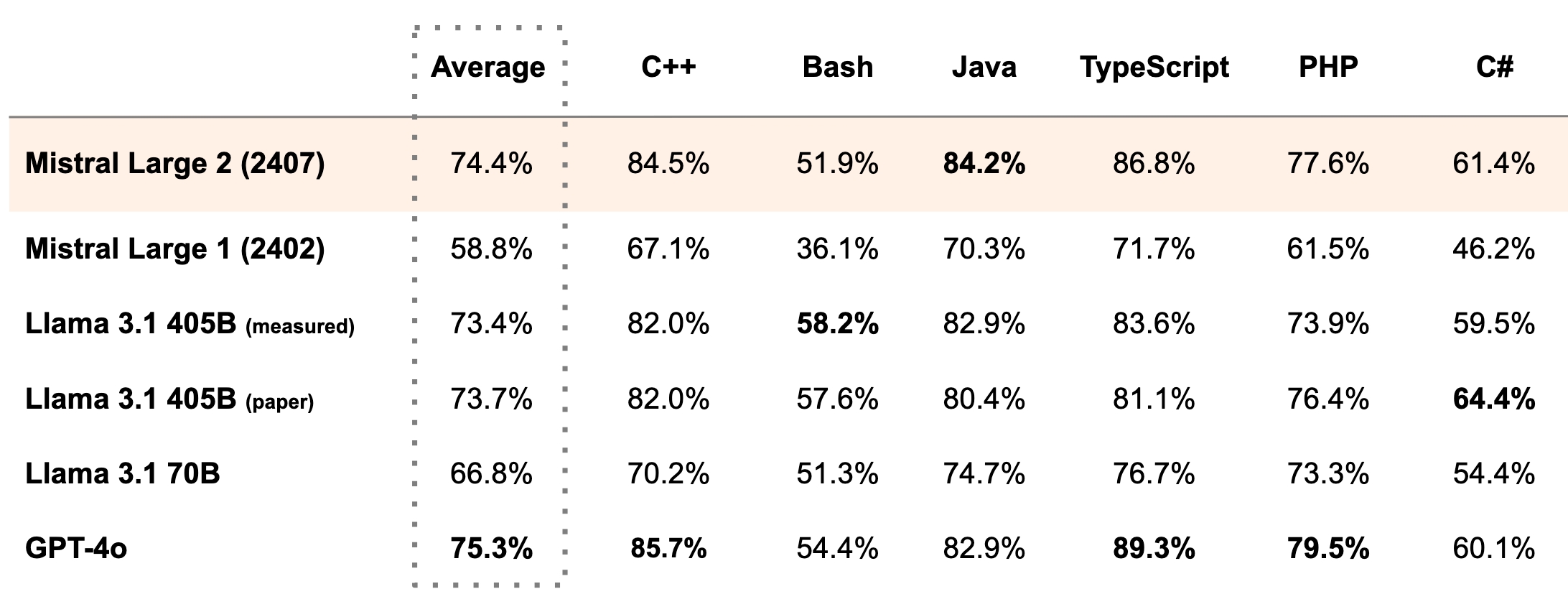

From what I've seen, it's definitely worth quantizing. I've used Llama 3 8B (fp16) and Llama 3 70B (q2_XS). The 70B version was way better, even at that level of quantization, and it fits perfectly in 24 GB of VRAM. There's also this comparison showing the quantization options and their benchmark scores:

Source
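For a rough sanity check on why a heavily quantized 70B can fit where you might not expect it to, here's a back-of-the-envelope sketch. It only counts the weights (no KV cache or runtime overhead), uses nominal parameter counts, and the ~2.3 bits per weight figure for the q2_XS quant is my assumption, not an exact number. `weight_size_gb` is just a helper name made up for this example.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Llama 3 8B at fp16: 16 bits per weight.
fp16_8b = weight_size_gb(8e9, 16.0)

# Llama 3 70B at roughly 2.3 bits per weight (assumed average for a q2_XS-style quant).
q2_70b = weight_size_gb(70e9, 2.3)

print(f"8B  fp16  : ~{fp16_8b:.1f} GB")
print(f"70B ~2.3b : ~{q2_70b:.1f} GB")
```

So under those assumptions the 70B quant is only a few GB bigger than the 8B at fp16, which lines up with it squeezing into 24 GB of VRAM.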

To run this particular model, though, you would need about 45 GB of RAM just for the q2_K quant, according to Ollama. I think I could run it by putting as many layers as fit on my GPU and offloading the rest to the CPU, but the performance wouldn't be great (probably less than 1 t/s).
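If you want to guess how many layers would go on the GPU in a split like that, here's a minimal sketch. The 45 GB and 24 GB figures come from the numbers above; the layer count (80) and the 2 GB reserved for KV cache and overhead are hypothetical placeholders, and it assumes all layers are the same size, which is only approximately true. `gpu_layers` is a made-up helper name.

```python
import math


def gpu_layers(model_gb: float, n_layers: int, vram_gb: float,
               reserve_gb: float = 2.0) -> int:
    """Estimate how many equally sized layers fit in VRAM.

    reserve_gb is VRAM held back for KV cache and runtime overhead
    (an assumed value, tune it for your setup).
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# 45 GB quant, hypothetical 80 layers, 24 GB card.
n_gpu = gpu_layers(model_gb=45.0, n_layers=80, vram_gb=24.0)
print(f"layers on GPU: {n_gpu} of 80, rest on CPU")
```

The result is the kind of number you would pass to something like llama.cpp's `--n-gpu-layers` option. With roughly half the layers running on the CPU, the CPU side ends up being the bottleneck, so slow generation is expected.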