this post was submitted on 09 Jul 2024

4 points (100.0% liked)

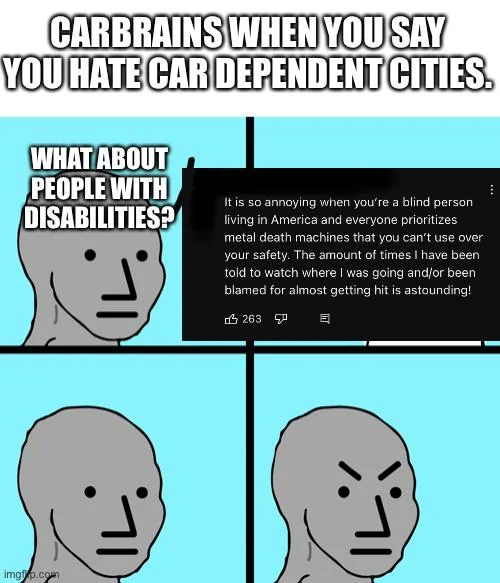

Fuck Cars

14255 readers

503 users here now

A place to discuss the problems of car-centric infrastructure and how it hurts us all. Let's explore the bad world of cars!

Rules

1. Be Civil

You may not agree on ideas, but please do not be needlessly rude or insulting to other people in this community.

2. No hate speech

Don't discriminate or disparage people on the basis of sex, gender, race, ethnicity, nationality, religion, or sexuality.

3. Don't harass people

Don't follow people you disagree with into multiple threads or into PMs to insult, disparage, or otherwise attack them. And certainly don't doxx any non-public figures.

4. Stay on topic

This community is about cars, their externalities in society, car dependency, and solutions to these problems.

5. No reposts

Do not repost content that has already been posted in this community.

Moderator discretion will be used to judge reports with regard to the above rules.

Posting Guidelines

Since Lemmy does not have a flair system yet, let’s make posts easier to scan by type by using tags:

- [meta] for discussions/suggestions about this community itself

- [article] for news articles

- [blog] for any blog-style content

- [video] for video resources

- [academic] for academic studies and sources

- [discussion] for text post questions, rants, and/or discussions

- [meme] for memes

- [image] for any non-meme images

- [misc] for anything that doesn’t fall cleanly into any of the other categories

founded 2 years ago
